The Nobel Prize was recently awarded to economists Abhijit Banerjee and Esther Duflo of MIT and Michael Kremer of Harvard. NPR described the researchers’ work as “applying the scientific method to an enterprise that, until recently, was largely based on gut instincts.”

An enterprise based on gut instincts? That sounds like education! The Institute of Education Sciences (IES), the arm of the U.S. Department of Education charged with providing reliable information about the effectiveness of education programs, is not even old enough to vote. It was not until 2015 that evidence was given much attention in federal education law, and state plans submitted under the Every Student Succeeds Act “mostly ignored research on what works,” according to Pemberton Research founder Mark Dynarski.

But attention to experimental evidence has been growing. The Nobel Prize press release specifically mentioned the use of randomized controlled trials (RCTs) to inform social policy intended to alleviate poverty. RCTs are studies that randomly assign individuals to an intervention group or to a control group in order to measure the effects of the intervention (see the visual below). RCTs are considered the strongest form of evidence by IES and under the Every Student Succeeds Act. Examples of RCTs in the education field include some of the Nobel laureates' work in India, and IES's What Works Clearinghouse catalogues the evidence base for education interventions, including many RCTs.

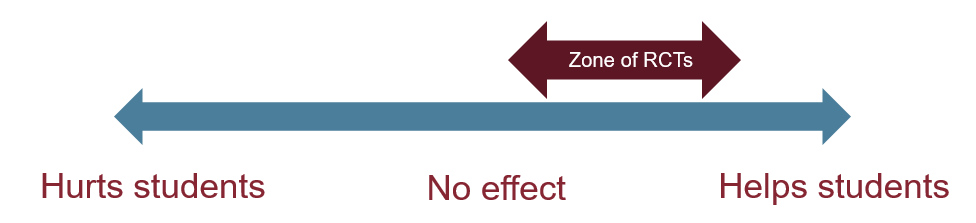
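To make the mechanics of random assignment concrete, here is a minimal Python sketch. It is an illustration under simplifying assumptions, not how IES or the researchers mentioned here actually run their studies: it splits a hypothetical roster of students in half at random and estimates the intervention's effect as the difference in average outcomes between the two groups. The function names, the roster, and the simulated scores (including the assumed 5-point effect) are all hypothetical.

```python
import random
import statistics


def randomly_assign(students, seed=None):
    """Shuffle a copy of the roster and split it into treatment and control halves."""
    rng = random.Random(seed)
    shuffled = list(students)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


def estimated_effect(treatment_outcomes, control_outcomes):
    """Estimate the intervention's effect as the difference in mean outcomes."""
    return statistics.mean(treatment_outcomes) - statistics.mean(control_outcomes)


if __name__ == "__main__":
    # Hypothetical roster of 200 students, identified only by number.
    roster = [f"student_{i}" for i in range(200)]
    treatment, control = randomly_assign(roster, seed=1)

    # Simulated (not real) test scores: every student draws from the same
    # distribution, and treated students get an assumed 5-point boost.
    rng = random.Random(2)
    treatment_scores = [rng.gauss(70, 10) + 5 for _ in treatment]
    control_scores = [rng.gauss(70, 10) for _ in control]

    effect = estimated_effect(treatment_scores, control_scores)
    print(f"Estimated effect: {effect:.1f} points")
```

Because assignment is random, the two groups should differ, on average, only in whether they received the intervention, which is why the simple difference in means can be read as an estimate of its effect. Real evaluations go further, for example by checking that the groups are balanced and reporting how precise the estimate is.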

Questions on causality — that is, when we want to know if some policy or program causes some outcome — are best served by experiments, at least for narrowly defined research questions. Yet the idea of experimenting on students (especially low-income or low-achieving students) can make people feel queasy, and so it is worth asking in what circumstances it makes sense to conduct an experimental study.

An experiment might make sense if we believe a policy or practice has some positive impact on people, but we're not sure about the size of the impact. Researchers should not experiment if they have reason to believe a policy or practice is harmful, because students in the "treatment" group would be harmed. Nor should researchers experiment if they are fairly confident that it is beneficial; in that case, students assigned to the "control" group would be harmed by being denied the treatment. See my simple graphic explanation below:

Inspired by a tweet from Vinay Prasad, Associate Professor of Medicine at Oregon Health and Science University

To get some sense of where education policies and programs fall on this spectrum, researchers and policymakers can look to other forms of evidence. For example, a school district that is selecting a new curriculum might survey parents, students, and educators for input on the strengths and weaknesses of the options. Interviews or focus groups with teachers about their perceptions of various curricula could help policymakers identify whether some options would be more feasible to adopt than others. A curriculum that requires extensive additional professional development could do more harm than good if the district is unable to provide the support needed. In this way, input from educators, parents, and students can increase the chances of selecting an option that will benefit students.

Qualitative and descriptive quantitative research, which do not involve experiments, can support the design of programs that help students. For example, the African American Male Achievement program in Oakland builds on qualitative research. The program's approach is supported by descriptive quantitative studies showing that Black students benefit from having Black teachers, with respect to both teachers' expectations and student outcomes such as attendance, assignment to gifted programs, suspensions, and college enrollment. Qualitative studies of the program highlighted peer-based support systems and a pedagogy of care paired with high expectations as promising practices. A recent study of the program complemented prior work by providing evidence that the program reduced the number of high school dropouts.

Multiple forms of evidence contribute to a broader understanding of what makes the program effective, shedding light on what resources or contextual factors might make the program particularly likely to succeed (or not) in other contexts. Combined, the evidence around the African American Male Achievement program makes a strong case for such programs, putting it in the "zone of RCTs." If district leaders are uncertain about whether to invest in a similar program, they might consider a small-scale experiment to assess the size of the impact.

Bellwether's team has provided evaluation support for four recipients of federal Charter Schools Program grants, and each of these projects includes multiple forms of evidence. We conducted interviews, observations, and focus groups in addition to quasi-experimental analyses of student achievement. In combination, these forms of evidence can inform continuous improvement as the number of schools in the networks expands. Ultimately, our goal is to ensure that evaluation serves the continuous improvement of education, using a variety of methods suited to the questions stakeholders are asking.